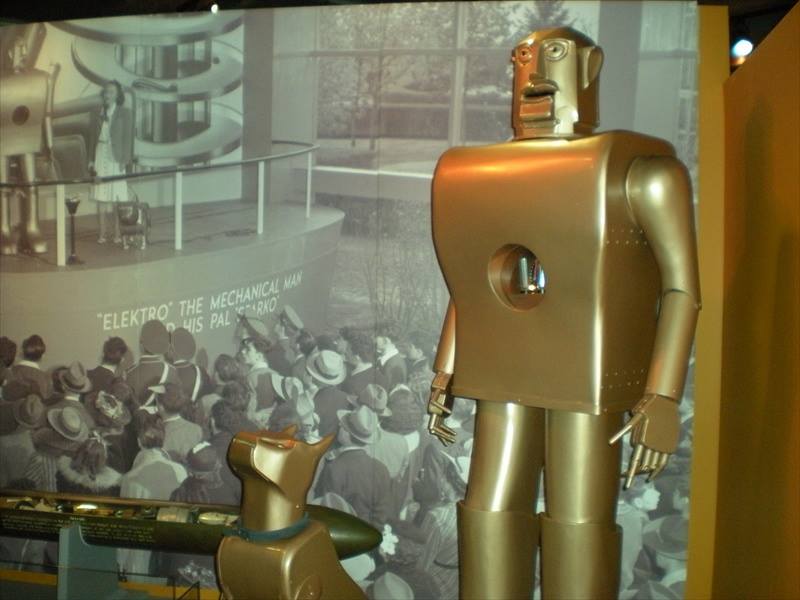

In 1939, Elektro, one of the first robots, was unveiled by the erstwhile Pittsburgh-based Westinghouse Electric Corporation. Elektro performed basic actions – it moved its arms and legs, responded to human commands and smoked a cigarette. The world was in awe. Soon stories, novels and movies about robots appeared, many of them portraying a doomsday brought on by the creation of robots. Elektro was created around the same time that early computers – analog machines with cathode ray tubes – were being developed. The world waited in anticipation for robots and computers to evolve into a second Homo sapiens. Computers succeeded in connecting the world, while robots succeeded in reducing labour and automating mundane tasks. When it came to taking decisions on their own, however, the results have not been that encouraging.

Decades later, when Google launched its self-driving car, the first reaction of many was “Oh, is it really possible?”. Driving needs dynamic decisions – based on rules, location, traffic and the behaviour of other commuters. Google's self-driving car relies heavily on the assumption that traffic rules are being followed – it takes the white lane markers and the yellow markers at the centre and sides of the road as a basic input. In the US, most drivers follow traffic rules, so it is reasonable to assume that the car can follow the white divider markers and keep a safe distance from other cars, while expecting human-driven cars to do the same. Despite all this obedience to traffic rules, we have seen self-driving cars fail. Put that car in India and, within a few seconds, all the advanced data analytics and robo-logic will go bonkers.

We often forget that, from Elektro to Google's cars, there is a human behind the machine. However hard that human tries to put as many parameters as possible into the computer chip to emulate the human brain, she still cannot turn a machine into a human being. To date, computers have made calculations faster and delivered pre-conditioned decisions. They have not evolved to learn to take decisions on their own, at least not yet.

Anything that involves advanced computing and technology catches the attention of the public. In India, robo-financial advisory is the new buzz in town. The promotion of the idea hinges on the assumption that human bias can be eliminated when machines take over financial advisory decisions. Of course, it could be. Unfortunately, what people forget is that the robot – or automated computing – simply follows human instructions. It is a human who feeds the algorithm the assumptions and parameters it uses to execute those instructions. Perhaps the only bias the algorithm eliminates is the human tendency to cheat, and unfortunately even that can be programmed in.

A financial journey is like traffic on Indian roads – it is never a straight path, and you cannot predict everything. At best, an automated investment system can take in several macro-economic factors and the current market conditions fed in by a human, and then churn out decisions. In some cases, the system provides a list of possible action items, and human experts then decide the course of action.

To understand whether robo-advisors can eliminate bias, let’s take a look at some of the biases a human being may be subject to while making a financial decision.

- Recency effect – The fact that something happened recently makes us take irrational decisions. An air accident, which is rare, makes us believe that the next accident will also happen soon, and passengers, crew and authorities take more than the normal measures to make sure an accident does not recur. However, after a few days, when the memories of the accident fade, everything is back to normal. In the stock market, when a bull run is in full swing, many retail investors put in their money. Or say someone makes a profit in an intraday trade through a stroke of luck; that person is highly likely to keep trading intraday for the next few days until she loses her entire capital.

Unfortunately, recency is one of the parameters in most trading and investment systems. After all, how else will you tell the computer that the economy is booming? Whether it is day trading or fundamental analysis, an estimate of the future stock price depends on a set of rising or falling parameters that end up in computer code to compute a decision.
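To make the point concrete, here is a minimal sketch of how recency can end up inside a trading rule. It is an illustration only, not the logic of any actual robo-advisor: the function name `momentum_signal`, the window sizes and the sample prices are all assumptions. The rule compares the average of the most recent prices with a longer average, so the most recent days effectively decide the outcome.

```python
from statistics import mean


def momentum_signal(prices, short_window=3, long_window=6):
    """Return 'buy', 'sell' or 'hold' from a simple moving-average comparison.

    Hypothetical rule for illustration: the short window only sees the most
    recent prices, so recent moves dominate the decision.
    """
    if len(prices) < long_window:
        raise ValueError("need at least long_window prices")
    short_avg = mean(prices[-short_window:])  # the recent past only
    long_avg = mean(prices[-long_window:])    # a slightly longer memory
    if short_avg > long_avg:
        return "buy"
    if short_avg < long_avg:
        return "sell"
    return "hold"


# Made-up closing prices for a stock in a short rally and in a short slide.
rally = [100, 101, 102, 104, 107, 111]
slide = list(reversed(rally))

print(momentum_signal(rally))
print(momentum_signal(slide))
```

The rule has no view on whether the rally is justified; it simply follows what happened recently, which is the same recency effect a human investor shows.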

- Choice paralysis – We may fail to take action simply because of the number of choices available to us. Say, which equity diversified mutual fund to buy? Or which life insurance policy to buy, when all policies appear to offer the same benefits and similar service? An automated system may help us get past this bias. But how will the system decide? What has the computer programmer written inside the code that the human brain cannot decide? The answer could be as simple as whichever company has paid more advertising money!

- Herd effect – We humans feel like doing what others are doing, on the assumption that the majority cannot be wrong. For trading systems, this again becomes an input parameter. Is the market rising? Are the volumes high? If so, the system concludes that the majority feels the “market” is rising.

- Confirmation bias – Looking for data that supports your thoughts. In a trading system, if the market has fallen and the moving averages are pointing down, you would typically see that as confirmation that the market is falling, while overlooking other indicators that may not necessarily indicate a downtrend.

- Optimism bias – We humans are eternal optimists. Ask an entrepreneur when she starts a business. And ask her again after a year, when she has lost her money and her investors’ money. We all believe that in an F&O trade we can hit the right option, that we can make the best stock call and that we can bet on the next Infosys. And how do computer programs avoid this bias? Well, one of the biases that a trading system actually

The last two biases are essentially linked to an emotional factor in humans. Since computers do not have emotions, the belief is that robo-advisory is better than human advisory. Nothing could be further from the truth. Emotional decisions, whether good or bad, help humans arrive at a decision. How would a computer decide which course of action is the best? Most likely, the decision would be predefined in the commercial sense, i.e., show the product of a sponsor. As a customer, you expect an answer from the computer; if you are shown five products that are equal in features, you lose confidence in the robo-system. Hence, to conform to the bias that the customer is expecting, the computer will show a decision – one that is often driven by commercial motives.

It is not that robo-advisory will never be useful. Computers have the advantage in crunching data. The most powerful output of robo-advisory is the generation of multiple options for the investor as well as for the advisor, so that the mathematics is taken care of and the focus stays on financial needs. With several models running, the computer can generate plenty of “What If” scenarios that help the investor and the financial advisor make more informed decisions.
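As a rough illustration of such “What If” scenarios, the sketch below computes how a fixed monthly investment might grow under a few assumed annual returns. Everything in it is hypothetical: the function names `future_value` and `what_if`, the monthly amount, the horizon and the return rates are chosen only for the example, and the formula assumes equal end-of-month investments with monthly compounding at a constant rate, which real markets do not offer.

```python
def future_value(monthly_investment, annual_return, years):
    """Future value of equal end-of-month investments, compounded monthly.

    Assumes a constant annual_return (e.g. 0.10 for 10%), a simplification
    used only to compare scenarios side by side.
    """
    months = years * 12
    monthly_rate = annual_return / 12
    if monthly_rate == 0:
        return monthly_investment * months
    growth = (1 + monthly_rate) ** months
    return monthly_investment * (growth - 1) / monthly_rate


def what_if(monthly_investment, years, annual_returns):
    """Map each assumed annual return to the resulting rounded corpus."""
    return {
        rate: round(future_value(monthly_investment, rate, years))
        for rate in annual_returns
    }


# Hypothetical inputs: 10,000 a month for 15 years under three return assumptions.
scenarios = what_if(10_000, 15, [0.06, 0.10, 0.14])
for rate, corpus in scenarios.items():
    print(f"{rate:.0%} a year -> {corpus:,}")
```

The computer does the arithmetic for every scenario; deciding which scenario is realistic, and what the money is needed for, remains a conversation between the investor and the advisor.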